I passed the AWS Solutions Architect Associate exam yesterday, and I’m not going to pretend it was easy or that I didn’t study. It wasn’t easy, and I definitely had to study. But the way I studied was different from anything I’ve done before, and I think that’s worth sharing.

Context #

I’ve been in tech for 20+ years and spent most of that time in application integration, analytics, and developer tools. The SAA exam covers breadth: networking, compute, storage, databases, security, and cost optimization, so basically the full spread of AWS services.

I’m also not a “study for 3 months” person. I’m a hands-on learner, and I get distracted by passive content. Watching a 40-hour video course end-to-end without something actively pulling me back to the material is very hard for me.

The study stack #

I used three things, and they each served a different purpose:

- Stephane Maarek’s Ultimate AWS Certified Solutions Architect Associate course: The content foundation. This is where I learned the material. Well-structured, covers everything, good pace.

- Practice exams: Exam pattern recognition. Getting used to how AWS phrases questions, what the distractors look like, and how to eliminate wrong answers.

- A custom AI skill I built: Retention and weak spot targeting. This is what made the studying stick.

The combination matters. The course teaches you the material. Practice exams teach you the exam format. The AI skill makes sure you actually remember what you learned and keeps hammering the things you keep getting wrong.

What the skill does #

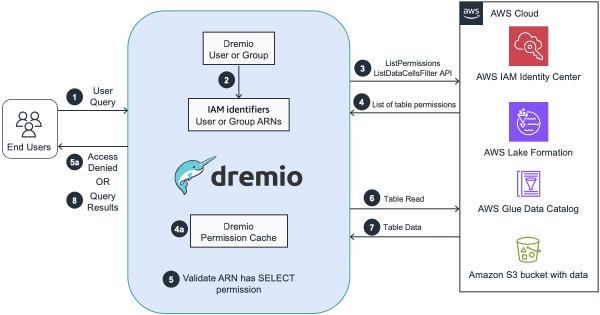

I built a “learning coach” skill for Kiro (it also works in Claude Code or any tool that supports SKILL.md files). Here’s what it does:

Persistent state #

The skill tracks everything across sessions in a JSON file. It keeps track of quiz history, weak spots, and flashcard intervals. When I start a new session, it knows exactly where I left off, what’s due for review, and what I’ve been struggling with.
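To make the idea concrete, here is a minimal Python sketch of the same load, update, and save cycle. In the skill itself this is done through plain-language instructions to the agent, not code, so treat this purely as an illustration. The field names follow the example state file shown later in this post; the function names and the empty default state are assumptions made for the sketch.

```python
import copy
import json
from datetime import date
from pathlib import Path

# Assumed empty defaults, mirroring the fields in the post's example state file.
EMPTY_STATE = {"weakSpots": [], "quizHistory": [], "flashcardsDue": [], "sessionCount": 0}


def load_state(path: Path) -> dict:
    """Return the saved state, or a fresh copy of the defaults if no file exists yet."""
    if not path.exists():
        return copy.deepcopy(EMPTY_STATE)
    return json.loads(path.read_text(encoding="utf-8"))


def save_state(path: Path, state: dict) -> None:
    """Write the state back as pretty-printed JSON, creating the folder if needed."""
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(state, indent=2), encoding="utf-8")


def cards_due(state: dict, today: date) -> list[dict]:
    """Flashcards whose nextReview date is today or earlier."""
    return [
        card for card in state["flashcardsDue"]
        if date.fromisoformat(card["nextReview"]) <= today
    ]
```

A session would call `load_state` first, use `cards_due` to decide what to review, and call `save_state` at the end so the next session picks up where this one stopped.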

Quizzes #

Quizzes are scenario-based, just like the ones you would see on the exam. So not just “what does S3 do?” but “your customer needs to store 50 TB of infrequently accessed data with millisecond retrieval times. Which storage class would you recommend?” After each answer, it explains why the correct answer is right and why the wrong answers are wrong. That second part is crucial for the exam. I also found that at some point I started typing out my reasoning instead of simply “A” or “B” so the skill could help me validate that as well.

Flashcards with SM-2 spaced repetition #

Cards get longer intervals when you know them, reset when you don’t. The SM-2 algorithm handles the scheduling:

- Forgot completely? Back to tomorrow.

- Got it but it was hard? Interval stays the same.

- Got it comfortably? Interval multiplied by the ease factor.

- Easy? Even longer interval.

This means I’m not wasting time reviewing things I already know. The system surfaces what needs reinforcement.
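The four outcomes above can be sketched in a few lines of Python. This is a simplified, four-button variant that follows the list literally, not the canonical SM-2 algorithm: the original SM-2 grades each answer from 0 to 5 and also adjusts the ease factor after every review, which this sketch leaves out. The `Card` class, the outcome names, and the `EASY_BONUS` value are assumptions for illustration; 2.5 is the starting ease factor SM-2 uses.

```python
from dataclasses import dataclass
from datetime import date, timedelta

EASY_BONUS = 1.3  # assumed extra multiplier for "easy"; not taken from the post


@dataclass
class Card:
    front: str
    back: str
    interval_days: int = 1
    ease: float = 2.5  # SM-2's starting ease factor; kept fixed in this sketch


def review(card: Card, outcome: str, today: date) -> date:
    """Update the card's interval in place and return its next review date."""
    if outcome == "forgot":
        card.interval_days = 1  # back to tomorrow
    elif outcome == "hard":
        pass  # interval stays the same
    elif outcome == "good":
        card.interval_days = round(card.interval_days * card.ease)
    elif outcome == "easy":
        card.interval_days = round(card.interval_days * card.ease * EASY_BONUS)
    else:
        raise ValueError(f"unknown outcome: {outcome!r}")
    return today + timedelta(days=card.interval_days)


if __name__ == "__main__":
    card = Card(front="Max S3 object size?", back="5 TiB", interval_days=2)
    print(review(card, "good", date(2026, 4, 13)))
```

Because a "good" answer multiplies the interval each time, a card you keep getting right drifts out to weeks between reviews, while a single "forgot" pulls it straight back to the next day.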

Weak spot detection #

If I miss the same concept in two or more quizzes, it gets flagged. It becomes a flashcard. It shows up in the next session. It keeps coming back until I nail it consistently. No concept falls through the cracks.
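As a rough sketch of that rule, the Python below counts how many distinct quizzes each concept was missed in and flags anything at two or more. The shape of `quiz_results` (a `quiz_id` plus a list of `missed` concepts) is a hypothetical one chosen for the example; the skill's real state file stores quiz history differently.

```python
from collections import defaultdict

MISS_THRESHOLD = 2  # "two or more quizzes", as described above


def find_weak_spots(quiz_results: list[dict]) -> list[str]:
    """Return concepts missed in at least MISS_THRESHOLD distinct quizzes.

    Each item is assumed to look like:
    {"quiz_id": "q1", "missed": ["S3 Glacier retrieval times"]}
    """
    quizzes_missed_in: dict[str, set[str]] = defaultdict(set)
    for result in quiz_results:
        for concept in result["missed"]:
            # A set means missing a concept twice in one quiz counts once.
            quizzes_missed_in[concept].add(result["quiz_id"])
    return sorted(
        concept
        for concept, quizzes in quizzes_missed_in.items()
        if len(quizzes) >= MISS_THRESHOLD
    )
```

Counting distinct quizzes instead of raw misses keeps one bad session from flagging a topic on its own.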

Exam traps #

The skill proactively calls out common distractors. Knowing why a distractor is wrong is more valuable than knowing the right answer. The exam is designed to test whether you can distinguish between similar services.

What actually helped most #

A few things stood out:

The weak spot loop. Miss something → it becomes a flashcard → it shows up again tomorrow → you either nail it or it resets. This loop is relentless and effective. By exam day, my weak spots list was empty.

Scenario-based questions using my work context. Generic quiz questions are fine, but my learning skill framed questions as “your customer asks…”, which brought them closer to my actual day-to-day work and made the material easier to learn and remember.

The confidence check. Quick 2-3 question spot checks on previously weak areas. If I nail them, the topic gets removed from the weak spots list. If I don’t, it stays. It seems quite simple, but it’s really motivating seeing the list grow shorter.

Exam trap callouts. After a few weeks of studying, I started recognizing distractors in practice exams before even reading all the options. That pattern recognition is what gets you from “passing” to “comfortable margin.”
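The confidence check described above comes down to one small rule, sketched here in Python. It assumes that "nailing" a spot check means answering every question in it correctly, and it reuses the `topic` field from the example state file; the function name is made up for the illustration.

```python
def apply_confidence_check(
    weak_spots: list[dict], topic: str, answers_correct: list[bool]
) -> list[dict]:
    """Return the weak-spot list after a spot check on `topic`.

    The topic is removed only if at least one question was asked and
    every answer was correct (an assumed definition of "nailed it").
    """
    passed = bool(answers_correct) and all(answers_correct)
    if not passed:
        return weak_spots  # topic stays on the list
    return [spot for spot in weak_spots if spot["topic"] != topic]
```

Returning a new list instead of editing in place keeps the previous state easy to compare against, which is handy when you want to see the list getting shorter.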

What didn’t work / what I’d change #

The AI skill can’t replicate the pressure of a timed exam. Practice exams are still essential for that. Sitting down for 65 questions with a clock ticking is a different experience than leisurely answering one question or a few at a time.

The skill (obviously) doesn’t know the exact exam question pool. It generates questions based on its understanding of the domain, which is good for learning but not a perfect proxy for the actual exam format. Practice exams from Stephane Maarek filled that gap for me.

If I did it again, I’d start the spaced repetition earlier. I began using the skill about halfway through the course. Starting from day one would have meant more review cycles and even stronger retention by exam day.

The skill itself #

The entire thing is a single SKILL.md file. No infrastructure, app, or database to maintain, just a markdown file with instructions and a JSON file for state.

Here’s the core of what it does:

    # Learning Coach

    You are a certification and upskilling coach. Your primary role is to help
    the user study, retain, and deeply understand technical concepts.

    ## Persistent State

    Track the user's learning progress in `~/.kiro/data/learning-state.json`.
    Load it at the start of every coaching session.

    ## Capabilities

    1. Learning Paths: structured weekly study plans
    2. Quizzes: scenario-based, one at a time, with explanations
    3. Flashcards: SM-2 spaced repetition scheduling
    4. Weak Spot Detection: auto-flags concepts missed 2+ times
    5. Service Comparisons: side-by-side with exam traps
    6. Exam Tips & Traps: proactive distractor callouts

The state file tracks everything:

    {
      "weakSpots": [{ "topic": "S3 Glacier retrieval times", "domain": "AWS" }],
      "quizHistory": [{ "date": "2026-04-13", "topic": "S3 storage classes", "score": "3/5" }],
      "flashcardsDue": [{ "front": "Max S3 object size?", "back": "5 TiB", "nextReview": "2026-04-15" }],
      "sessionCount": 42
    }

How to use it #

- Drop the SKILL.md file in your `~/.kiro/skills/learning-coach/` directory (for Kiro) or the equivalent for your tool

- Activate it in a conversation

- Tell it what you’re studying for

- Start quizzing

The full skill file is available on GitHub. While I built it for AWS SAA, the skill is domain-agnostic and should work for any certification or technical topic.

Takeaway #

The combination of content course + practice exams + AI retention tool is what worked. Each piece alone isn’t enough:

- The course without practice exams means you know the material but can’t navigate the exam format.

- Practice exams without the course means you’re pattern-matching without understanding.

- The AI skill without either means you’re retaining… nothing, because there’s nothing to retain yet.

But together, they cover learning, format familiarity, and retention. For hands-on learners who get distracted by passive content, having something that actively quizzes you, adapts, remembers your weak spots, and won’t let you forget is the difference between “I think I know this” and actually knowing it.